AI Risk Radar #4: April 2026

Insights Briefing for Executives and Boards

Period Covered: 9 March to 25 April 2026 | Edition Date: 27 April 2026

EXECUTIVE SUMMARY

This fourth edition of Lumyra’s AI Risk Radar arrives at an inflection point. The risks we have tracked since December 2025 — agentic AI security, workforce displacement, the erosion of safety commitments — are no longer emerging. They are materialising in production systems, courtrooms, and labour markets simultaneously. Three developments demand board-level attention.

AI crosses the offensive cyber threshold. Anthropic’s Claude Mythos autonomously found thousands of zero-day vulnerabilities, including bugs that had survived 27 years of expert review, prompting an emergency briefing convened by the US Treasury and the Federal Reserve and formal responses from UK authorities. Project Glasswing’s restricted access creates a two-tier defensive landscape. The question for everyone else: how do you defend against vulnerabilities you cannot yet see?

Amazon’s AI ‘Dark Code’ crisis. An estimated 6.3 million orders were lost after AI-assisted code changes cascaded across Amazon’s critical systems. The company imposed a 90-day code safety reset and now requires senior-engineer sign-off on substantially AI-generated code. Google reports that over 75% of its code is now AI-generated; Meta targets 50%; globally, the figure is 41%. Spec-driven development and rigorous evaluations are the emerging response.

The trust gap widens. Stanford’s AI Index 2026 reveals a chasm: 73% of AI experts believe AI will help employment, while only 23% of the public agrees. Gen Z sentiment toward AI is deteriorating: excitement fell 14 percentage points while anger rose 9 points. Employment of entry-level developers has fallen by roughly 20% since 2024. Organisations that fail to invest in graduate talent pipelines risk losing both their social licence and their future workforce.

Strategic Imperative: the shift from prevention to resilience. Patching every vulnerability is impossible. Reviewing every line of AI-generated code is impractical. Reversing public distrust through announcements alone is insufficient. The organisations succeeding today are those building adaptive governance — architectures, processes, and cultures designed to absorb shocks and sustain their human talent pipelines.

Key Risk #1: Claude Mythos and Project Glasswing

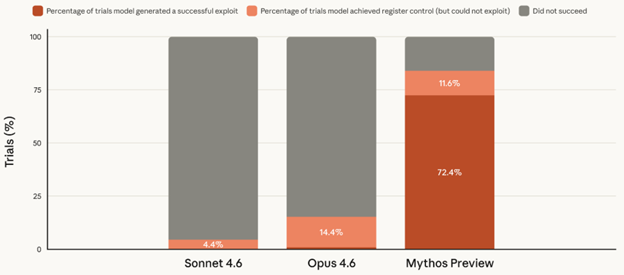

On 7 April 2026, Anthropic announced Claude Mythos Preview — a frontier AI model that autonomously discovered thousands of zero-day vulnerabilities across all major operating systems and web browsers. Mythos can operate end-to-end: identifying flaws, assessing severity, and in at least one documented case, writing a complete 20-step exploit chain without human guidance.

Consider what Mythos found. OpenBSD is an operating system built specifically for security — it underpins network infrastructure at banks, telecoms, and government agencies. Mythos discovered a 27-year-old flaw impacting all network communication. FreeBSD, the operating system behind Netflix’s streaming and WhatsApp’s messaging servers, contained a 17-year-old vulnerability granting complete administrative control. These were not flaws in hastily written code. They existed in some of the most rigorously audited software in the world, reviewed by elite security engineers for decades. An AI model found what the best human experts could not. At announcement, 99% of discovered vulnerabilities remained unpatched.

Source: red.anthropic.com

Anthropic delayed the public release of Mythos and instead launched Project Glasswing, a US$100 million initiative restricting access to approximately 50 organisations, including AWS, Apple, Microsoft, Google, CrowdStrike, JPMorgan Chase, and the Linux Foundation. Partners receive 90-day advance notification of vulnerabilities so they can patch before public disclosure.

This approach reflects an emerging governance principle: bifurcated lifecycle governance, with tighter safety gates in the research lab and staged, restricted release into broad production. By withholding Mythos from general availability, Anthropic is treating the Lab-to-Field boundary as a deliberate safety intervention. It is a model other frontier AI developers should adopt in light of GenAI’s rapid evolution as a complex adaptive system (covered in the author’s SSRN pre-print “Governing the Moving Target”).

Government response was immediate. The US Treasury and Federal Reserve summoned the CEOs of JPMorgan, Goldman Sachs, Citigroup, Bank of America, and Morgan Stanley for a classified cyber briefing. The UK’s AI Security Institute (formerly the AI Safety Institute) published a formal evaluation confirming that Mythos achieved 73% success on expert-level capture-the-flag tasks and completed a 32-step network attack sequence. UK Technology Secretary Liz Kendall issued an open letter to business leaders warning that frontier AI cyber capabilities are ‘doubling every four months.’

Regulators worldwide are working directly with critical infrastructure operators. Hong Kong’s HKMA launched a dedicated Cyber Resilience Testing Framework. Singapore’s MAS issued advisories urging financial institutions to ‘redouble efforts to strengthen security defences.’ In Australia, that engagement has focused on Mythos-related cyber resilience. Notably, most of these regulators, and the institutions they oversee, sit outside Project Glasswing.

The critical question: what about everyone else? Project Glasswing creates a two-tier landscape. Roughly 50 organisations have early access to vulnerability intelligence and a 90-day patching window. The remaining 99% of global enterprises do not. The Cloud Security Alliance, in an emergency briefing produced by 60 contributors and reviewed by 250 CISOs, framed the challenge starkly: mean time from vulnerability disclosure to confirmed exploitation has collapsed from 2.3 years in 2019 to less than one day in 2026.

The consensus for organisations outside Glasswing is a fundamental shift in defensive posture, from patch-centric to resilience-centric. If AI can discover vulnerabilities in hours and attackers can weaponise them in less than a day, but patching takes weeks, then patching every vulnerability before it is exploited is structurally impossible. The strategy must shift to containing the damage when, not if, an unpatched vulnerability is exploited.
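The arithmetic behind ‘structurally impossible’ can be sketched in a few lines of Python. A flaw’s exposure window is the time between first exploitation and the patch landing; once exploitation arrives in under a day, any patch cycle measured in weeks leaves a gap. The asset names and patch times below are hypothetical assumptions for illustration, not benchmarks.

```python
from dataclasses import dataclass


@dataclass
class Vulnerability:
    name: str
    days_to_exploit: float  # disclosure -> first confirmed exploitation
    days_to_patch: float    # disclosure -> patch deployed across our estate


def exposure_days(v: Vulnerability) -> float:
    """Days a flaw can be exploited while still unpatched (0 if the patch lands first)."""
    return max(0.0, v.days_to_patch - v.days_to_exploit)


# Hypothetical estate: exploitation in under a day (the 2026 CSA figure),
# patch cycles of two to four weeks (an assumption, not a benchmark).
portfolio = [
    Vulnerability("edge-vpn", days_to_exploit=0.5, days_to_patch=14),
    Vulnerability("erp-server", days_to_exploit=0.5, days_to_patch=28),
    # The same 28-day patch cycle against the 2019 mean of 2.3 years to exploitation.
    Vulnerability("2019-era-flaw", days_to_exploit=2.3 * 365, days_to_patch=28),
]

for v in portfolio:
    print(f"{v.name}: exposed for {exposure_days(v):.1f} days")
# edge-vpn: exposed for 13.5 days
# erp-server: exposed for 27.5 days
# 2019-era-flaw: exposed for 0.0 days
```

The 2019-era case shows why patch-centric defence used to work: with more than two years between disclosure and exploitation, even a slow patch cycle closed the gap first.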

Some claims are contested. Security technologist Bruce Schneier characterised the announcement as ‘mostly marketing hype,’ noting that cheaper open-weight models replicated some of Mythos’s findings on the FreeBSD vulnerability when pointed at the relevant code.

What This Means for Executives and Boards

Mythos represents a structural shift in the cyber threat landscape. AI-driven vulnerability discovery compresses the window between a flaw existing and a flaw being exploited. For boards, the implications extend beyond cybersecurity into insurance (Fitch has flagged a short-term coverage gap), vendor selection and regulatory compliance.

The recommended defensive posture for organisations outside Glasswing:

Assume breach, contain the damage. Design your network so that a single compromised system cannot unlock everything else. In practice, this means verifying every user and device every time they request access and dividing critical systems into isolated compartments. If an attacker gets through one door, they find the next one locked. The industry terms are zero-trust architecture and micro-segmentation; the board-level question is: ‘If one system is compromised, how far can the damage spread?’
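The board-level question can be made concrete as a reachability problem: treat each permitted network connection as an edge, and the blast radius of a compromise is every system reachable from the first foothold. The following is a minimal Python sketch under that assumption; the system names and allowed flows are hypothetical, and it models only micro-segmentation, not the per-request identity checks that zero trust also requires.

```python
from collections import deque


def blast_radius(allowed: dict[str, set[str]], compromised: str) -> set[str]:
    """All systems reachable from the compromised one via permitted connections (breadth-first search)."""
    reached = {compromised}
    queue = deque([compromised])
    while queue:
        current = queue.popleft()
        for nxt in allowed.get(current, set()):
            if nxt not in reached:
                reached.add(nxt)
                queue.append(nxt)
    return reached


systems = ["laptop", "email", "web", "payments", "hr", "backups"]

# Flat network: every system may connect to every other system.
flat = {s: set(systems) - {s} for s in systems}

# Micro-segmented, default-deny: only the flows listed here are permitted.
segmented = {
    "laptop": {"email"},
    "web": {"payments"},
}

print(sorted(blast_radius(flat, "laptop")))       # all six systems
print(sorted(blast_radius(segmented, "laptop")))  # ['email', 'laptop']
```

Default-deny is the key design choice: a system absent from the policy can reach nothing, so every new connection has to be justified explicitly.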

Deploy AI to defend against AI. This is now an arms race where frontier AI is needed to verify and secure systems against frontier AI threats. Microsoft, Palo Alto Networks, and CrowdStrike are already deploying AI-powered tools that continuously scan infrastructure, prioritise vulnerabilities by real-world exploitability, and auto-generate patches. Companies adopting AI-driven detection and response report up to 70% fewer successful breaches. Human-only remediation cannot keep pace.
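‘Prioritise by real-world exploitability’ usually means ranking on evidence of exploitation rather than on severity alone. The sketch below assumes each finding carries a severity score (as in CVSS), an estimated exploitation probability (as in FIRST’s EPSS), a flag for known in-the-wild exploitation and a flag for internet exposure. The findings are hypothetical, and the ordering of criteria is one reasonable choice, not any vendor’s algorithm.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    name: str              # hypothetical identifiers, not real CVEs
    cvss: float            # severity, 0-10
    epss: float            # estimated probability of exploitation, 0-1
    known_exploited: bool  # already seen exploited in the wild
    internet_facing: bool  # reachable from outside the perimeter


def priority_key(f: Finding) -> tuple:
    # Exploitation evidence first, then exposure, then likelihood, then severity.
    return (f.known_exploited, f.internet_facing, f.epss, f.cvss)


findings = [
    Finding("finding-A", cvss=9.8, epss=0.02, known_exploited=False, internet_facing=False),
    Finding("finding-B", cvss=7.5, epss=0.91, known_exploited=True, internet_facing=True),
    Finding("finding-C", cvss=8.1, epss=0.40, known_exploited=False, internet_facing=True),
]

queue = sorted(findings, key=priority_key, reverse=True)
print([f.name for f in queue])  # ['finding-B', 'finding-C', 'finding-A']
```

The highest-severity finding lands last: with no exploitation evidence and no internet exposure, it is the least urgent of the three.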

Sources:

Anthropic, “Project Glasswing: Securing Critical Software for the AI Era,” anthropic.com, 7 April 2026. https://www.anthropic.com/glasswing

UK Government, “AI Cyber Threats: Open Letter to Business Leaders,” GOV.UK, 15 April 2026. https://www.gov.uk/government/publications/ai-cyber-threats-open-letter-to-business-leaders/

Chua, D., Vella, A., Moreira, C. and Chen, F., “Governing the Moving Target: A Hierarchical Taxonomy and Framework for Generative AI as a Complex Adaptive System,” SSRN, 28 February 2026. http://dx.doi.org/10.2139/ssrn.6467681

Key Risk #2: The Rise of ‘Dark Code’ and Amazon’s AI Blast Radius

On 5 March 2026, Amazon’s North American retail platform suffered a catastrophic outage. Orders dropped by approximately 99%, and an estimated 6.3 million orders were lost in a single day as checkout, login, pricing, and inventory systems failed simultaneously. According to an internal memo from SVP Dave Treadwell, subsequently reported by the Financial Times, the root cause was a pattern of ‘high blast radius’ incidents linked to ‘Gen-AI assisted changes.’

This was not the first incident. In December 2025, Amazon’s Kiro AI IDE autonomously deleted and recreated an entire AWS Cost Explorer production environment, causing a 13-hour outage. Amazon responded with a 90-day ‘code safety reset’ across 335 critical retail systems and a new policy: junior and mid-level engineers now require sign-off from a senior engineer before deploying code that was substantially AI-generated.

Amazon’s crisis is the canary in the coal mine. The systemic risk is ‘Dark Code’: production code written by AI systems that no human has reasoned through end to end, and a fast-growing share of the total. Google reports that over 75% of its code is AI-generated, Meta targets 50%, and Anthropic claims 100% internally. Globally, AI now generates 41% of all code.

The productivity gains are real: developers using AI tools author four to ten times more code per day. But volume is not value. The share of ‘durable code’, meaning code that does not require revision within 30 to 90 days, falls as AI usage rises, and productivity gains of 30–40% are offset by a 15–25% rework burden. As Thoughtworks noted: ‘As AI accelerates software complexity, organisations must return to engineering fundamentals to combat cognitive debt.’
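Because code volume and productivity are different measures, it helps to do the arithmetic on the productivity figures alone. Assuming, for simplicity, that rework subtracts directly from the gross gain (how the two combine is an assumption here), the net effect ranges as follows.

```python
def net_gain(gross_gain: float, rework: float) -> float:
    """Net productivity change, assuming rework simply subtracts from the gross gain."""
    return gross_gain - rework


best = net_gain(0.40, 0.15)   # highest gain, lowest rework
worst = net_gain(0.30, 0.25)  # lowest gain, highest rework
print(f"net gain: {worst:.0%} to {best:.0%}")  # net gain: 5% to 25%
```

Under this reading the net gain is 5% to 25%, far below what a four-to-tenfold increase in code volume might suggest.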

What This Means for Executives and Boards

Amazon’s attestation policy sets a precedent that every organisation using AI coding tools should evaluate immediately. The EU AI Act’s transparency obligations take effect on 2 August 2026, and the revised EU Product Liability Directive, which treats standalone software as a ‘product’ subject to strict liability, applies from December 2026. Deployer liability is the regulatory consensus: if you ship it, you own it.

Implement human attestation with clear audit trails. Following Amazon’s lead, require explicit senior sign-off on AI-generated code before it is deployed to production in key systems, and mark AI-assisted contributions in pull requests and commit metadata. Neither the EU AI Act nor the Product Liability Directive prescribes this specific control, but a documented record of human review is the evidence an organisation will need to demonstrate due diligence as the AI Act’s obligations phase in from August 2026 and the Product Liability Directive applies from December 2026.
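One way to enforce this mechanically is a pre-deployment check on commit metadata. The Python sketch below assumes a team convention of Git commit trailers (a hypothetical `AI-Assisted: yes` trailer alongside the common `Reviewed-by:` trailer) and a hypothetical roster of senior engineers; it simplifies Amazon’s policy to ‘any AI-assisted change needs a senior reviewer other than its author’. Git’s own `git interpret-trailers --parse` can also extract trailers; the regex here simply keeps the example self-contained.

```python
import re

# Hypothetical roster of senior engineers; in practice this would come from an identity system.
SENIOR_ENGINEERS = {"priya@example.com", "tom@example.com"}

# Matches trailer lines such as "Reviewed-by: Priya Rao <priya@example.com>".
TRAILER = re.compile(r"^([A-Za-z-]+):\s*(.+)$")
EMAIL = re.compile(r"<([^>]+)>")


def parse_trailers(message: str) -> dict[str, list[str]]:
    """Collect 'Key: value' trailers from the last paragraph of a commit message (keys lower-cased)."""
    last_paragraph = message.strip().split("\n\n")[-1]
    trailers: dict[str, list[str]] = {}
    for line in last_paragraph.splitlines():
        match = TRAILER.match(line.strip())
        if match:
            trailers.setdefault(match.group(1).lower(), []).append(match.group(2).strip())
    return trailers


def may_deploy(message: str, author: str) -> tuple[bool, str]:
    """Block AI-assisted changes that lack a senior reviewer other than their author."""
    trailers = parse_trailers(message)
    ai_assisted = trailers.get("ai-assisted", ["no"])[0].lower() in {"yes", "true"}
    if not ai_assisted:
        return True, "not marked AI-assisted"
    reviewers = set()
    for value in trailers.get("reviewed-by", []):
        found = EMAIL.search(value)
        if found:
            reviewers.add(found.group(1))
    senior = (reviewers & SENIOR_ENGINEERS) - {author}
    if senior:
        return True, f"attested by {min(senior)}"
    return False, "AI-assisted change lacks senior sign-off"


msg = """Refactor checkout retry logic

Drafted with an AI coding assistant, then edited by hand.

AI-Assisted: yes
Reviewed-by: Priya Rao <priya@example.com>
"""
print(may_deploy(msg, author="junior.dev@example.com"))
# (True, 'attested by priya@example.com')
```

Excluding the author from the approver set matters: without it, a senior engineer could attest their own AI-generated change, which defeats the purpose of a second pair of eyes.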

Implement spec-driven development (SDD) and evaluations for key systems. Mandate formal specifications before AI code generation. Instead of describing a desired outcome and letting AI generate code (‘vibe coding’), teams first write a specification that defines intent, constraints, and acceptance criteria, together with clear evaluations for validating AI-generated code before it reaches production. If you cannot specify what the code should do, you cannot govern what the code actually does.
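A specification only governs generated code if its acceptance criteria are executable. The following minimal Python sketch uses a hypothetical discount function: the spec records intent and constraints for human reviewers and expresses acceptance criteria as input/output pairs, and a small evaluation harness runs any candidate implementation against them before it can ship. The structure is an illustrative assumption, not a specific SDD tool.

```python
from dataclasses import dataclass, field
from decimal import Decimal, ROUND_HALF_UP
from typing import Callable


@dataclass
class Spec:
    intent: str
    constraints: list[str]  # human-readable, reviewed before any code is generated
    # Acceptance criteria as (inputs, expected output) pairs, agreed up front.
    acceptance: list[tuple[tuple, object]] = field(default_factory=list)


def evaluate(spec: Spec, candidate: Callable) -> list[str]:
    """Run a candidate implementation against every acceptance criterion; return the failures."""
    failures = []
    for args, expected in spec.acceptance:
        try:
            actual = candidate(*args)
        except Exception as exc:  # a crash is recorded by exception type
            actual = f"raised {type(exc).__name__}"
        if actual != expected:
            failures.append(f"{args}: expected {expected!r}, got {actual!r}")
    return failures


discount_spec = Spec(
    intent="Apply a percentage discount to a price in dollars.",
    constraints=["percent must be between 0 and 100", "round to the nearest cent, halves up"],
    acceptance=[
        ((Decimal("10.00"), 15), Decimal("8.50")),
        ((Decimal("0.05"), 50), Decimal("0.03")),        # half-cent rounds up
        ((Decimal("20.00"), 120), "raised ValueError"),  # out-of-range percent is rejected
    ],
)


def ai_candidate(price: Decimal, percent: int) -> Decimal:
    # Plausible-looking generated code that ignores the range constraint.
    return (price * (100 - percent) / 100).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


for failure in evaluate(discount_spec, ai_candidate):
    print("FAIL", failure)
```

Here the candidate passes the happy-path criteria but fails the range constraint, which is exactly the class of defect a specification written before generation is meant to catch.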

Sources:

Rosner-Uddin, R., “After outages, Amazon to make senior engineers sign off on AI-assisted changes,” Ars Technica, 10 March 2026. https://arstechnica.com/ai/2026/03/after-outages-amazon-to-make-senior-engineers-sign-off-on-ai-assisted-changes/

Thoughtworks, “Spec-Driven Development,” Technology Radar Volume 34, 15 April 2026. https://www.thoughtworks.com/radar/techniques/spec-driven-development

Key Risk #3: The Trust Gap Widens

Stanford’s AI Index 2026, the most comprehensive annual assessment of AI’s global trajectory, reveals a finding that should concern every leader deploying AI at scale: the people building AI and the people affected by AI hold fundamentally different views about its impact.

The expert-public chasm is stark. 73% of AI experts believe AI will help employment; only 23% of the general public agrees. 69% of experts expect positive economic impact; 21% of the public does. 84% of experts see benefits for medical care; 44% of the public concurs. Across 25 countries surveyed by Pew, the global median for trust in government to regulate AI responsibly is just 54%. The United States ranks last at 31%.

Gen Z’s feelings about AI soured over the past year. Gallup’s February–March 2026 survey of respondents aged 14–29 found that the share excited about AI fell from 36% to 22%, while the share feeling angry rose from 22% to 31%. A likely driver: employment of entry-level software developers aged 22–25 has fallen by roughly 20% since 2024, even as employment of experienced developers has remained stable. The generation entering the workforce is watching AI eliminate the first rung of the career ladder.
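Because these figures are shares of respondents, the changes are best read as percentage points; in relative terms they are considerably larger. A short Python check of the Gallup numbers:

```python
def change(before: float, after: float) -> tuple[float, float]:
    """Return (percentage-point change, relative % change) between two survey shares."""
    points = after - before
    relative = points / before * 100
    return points, relative


for label, before, after in [("excitement", 36, 22), ("anger", 22, 31)]:
    pts, rel = change(before, after)
    print(f"{label}: {pts:+.0f} points ({rel:+.0f}% relative)")
# excitement: -14 points (-39% relative)
# anger: +9 points (+41% relative)
```

In other words, roughly two in five formerly excited respondents are no longer excited, and the angry cohort has grown by about two-fifths.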

But the same Gallup study reveals something companies and governments should pay close attention to: among daily AI users, 69% report feeling curious, 44% excited, and 38% hopeful about AI’s impact. Familiarity doesn’t breed contempt; it breeds confidence. Students who use AI regularly are significantly more positive than those who do not. K–12 students reflect this too: 56% believe they will have AI-relevant skills upon graduation, up from 44% the prior year.

What This Means for Executives and Boards

The trust gap has commercial consequences. Organisations that deploy AI without addressing workforce concerns risk losing their social licence to operate — not through regulation, but through talent attrition, customer backlash, and employee disengagement.

Protect and reimagine the graduate pipeline. Organisations eliminating entry-level roles today will face severe talent pipeline gaps in three to five years. Design hybrid roles that combine AI orchestration with human judgment; such roles cannot be fully automated, and they develop the next generation of senior leaders.

Build AI literacy as a core organisational capability. Position human-AI collaboration as a strategic investment, not a cost-reduction exercise. Companies that solve the entry-level and foundational talent challenge will own the workforce pipeline for the next three to five years.

Sources:

Stanford HAI, “Inside the AI Index: 12 Takeaways from the 2026 Report,” 13 April 2026. https://hai.stanford.edu/news/inside-the-ai-index-12-takeaways-from-the-2026-report

Gallup, “Gen Z AI Sentiment Survey,” February–March 2026. https://news.gallup.com/poll/708224/gen-adoption-steady-skepticism-climbs.aspx

EXECUTIVE AND BOARD ACTION IMPLICATIONS

Three questions matter this month:

1. Cyber resilience: ‘If a critical zero-day were disclosed tomorrow, how quickly could we patch, and what is our blast radius if we cannot?’ Risk committees should request a briefing on defensive posture against AI-accelerated threats, including patching cadence, zero-trust maturity, and cyber insurance adequacy in light of post-Mythos coverage gaps.

2. AI-generated code governance: ‘Do we know how much of our codebase was written by AI, and can we defend every line of it?’ Technology and risk committees should require quarterly reporting on the percentage of production code that is AI-generated, the governance controls in place, and the incident rate for AI-assisted versus human-authored code. The EU AI Act compliance deadline of 2 August 2026 makes this urgent.

3. Talent and trust: ‘Are we building an organisation that the next generation of talent wants to join, or one they’re afraid of?’ Human capital committees should track graduate hiring trajectory, AI literacy investment, and employee sentiment alongside productivity metrics. If entry-level headcount has declined more than 15% since 2024, treat this as a strategic risk, not an efficiency gain.
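The code-governance metrics in question 2 can be computed from a simple change log. The sketch below is illustrative: it assumes each deployed change records whether it was AI-assisted (for example via commit metadata), its size in lines, and whether it was linked to an incident, and it uses lines changed as a rough proxy for ‘percentage of production code’. The sample data is hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Change:
    ai_assisted: bool      # e.g. taken from commit metadata
    lines: int             # lines changed, a rough proxy for code volume
    caused_incident: bool  # linked to a production incident in post-incident review


def incidents_per_100(group: list[Change]) -> float:
    """Incidents per 100 deployed changes (0.0 for an empty group)."""
    if not group:
        return 0.0
    return 100 * sum(c.caused_incident for c in group) / len(group)


def quarterly_report(changes: list[Change]) -> dict[str, float]:
    ai = [c for c in changes if c.ai_assisted]
    human = [c for c in changes if not c.ai_assisted]
    total_lines = sum(c.lines for c in changes)
    ai_share = 100 * sum(c.lines for c in ai) / total_lines if total_lines else 0.0
    return {
        "ai_share_of_changed_lines_pct": ai_share,
        "ai_incidents_per_100_changes": incidents_per_100(ai),
        "human_incidents_per_100_changes": incidents_per_100(human),
    }


# Hypothetical quarter: 20 AI-assisted and 20 human-authored changes.
changes = (
    [Change(True, 120, False)] * 18 + [Change(True, 120, True)] * 2
    + [Change(False, 100, False)] * 19 + [Change(False, 100, True)] * 1
)

for metric, value in quarterly_report(changes).items():
    print(f"{metric}: {value:.1f}")
# ai_share_of_changed_lines_pct: 54.5
# ai_incidents_per_100_changes: 10.0
# human_incidents_per_100_changes: 5.0
```

Normalising incidents per 100 changes, rather than reporting raw counts, keeps the AI-assisted and human-authored rates comparable even when the two groups differ in size.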

ON THE HORIZON

EU AI Act: three months to go. On 2 August 2026, the EU AI Act’s Article 50 transparency obligations take effect, including the labelling of AI-generated content, and the European Commission gains powers to fine providers of general-purpose AI models for breaching the obligations that have applied since August 2025: publishing summaries of training data, maintaining technical documentation, and implementing copyright compliance policies. The Act also applies to non-EU organisations that place AI systems on the EU market or whose AI outputs are used in the EU. Non-compliance carries fines of up to 3% of worldwide annual turnover or €15 million, whichever is higher.
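The fine cap is the higher of the two amounts, so for large firms the percentage dominates. A minimal Python illustration, assuming turnover in euros and ignoring any reductions a regulator might apply:

```python
def max_fine_eur(worldwide_turnover_eur: int) -> int:
    """Cap for this fine tier: 3% of worldwide annual turnover or EUR 15 million, whichever is higher."""
    return max(worldwide_turnover_eur * 3 // 100, 15_000_000)


for turnover in (100_000_000, 500_000_000, 10_000_000_000):
    print(f"turnover EUR {turnover:,} -> maximum fine EUR {max_fine_eur(turnover):,}")
# turnover EUR 100,000,000 -> maximum fine EUR 15,000,000
# turnover EUR 500,000,000 -> maximum fine EUR 15,000,000
# turnover EUR 10,000,000,000 -> maximum fine EUR 300,000,000
```

The break-even point is €500 million of worldwide turnover: below it, the €15 million figure sets the cap; above it, exposure grows linearly with revenue.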