AI Risk Radar #3

March 2026

Insights Briefing for Executives and Boards

Period Covered: 1 February to 8 March 2026 | Edition Date: 9 March 2026

EXECUTIVE SUMMARY

This third edition of Lumyra’s AI Risk Radar lands in the most consequential week for AI governance since GenAI entered the mainstream. Where our January edition highlighted Anthropic CEO Dario Amodei’s civilisational warning about AI’s ‘most dangerous window,’ the March Risk Radar provides concrete evidence that the tensions he described are now playing out across boardrooms, battlefields, and labour markets.

1. OpenClaw exposes agentic AI’s ungoverned frontier. The viral open-source AI agent amassed 247,000 GitHub stars in weeks – and with it, a cascade of critical security incidents: a CVE-rated remote code execution flaw, 800+ malicious plugins, and 42,000 internet-exposed instances. Cisco, Palo Alto Networks, and CrowdStrike all issued enterprise warnings. Shadow AI deployment is now a real corporate threat.

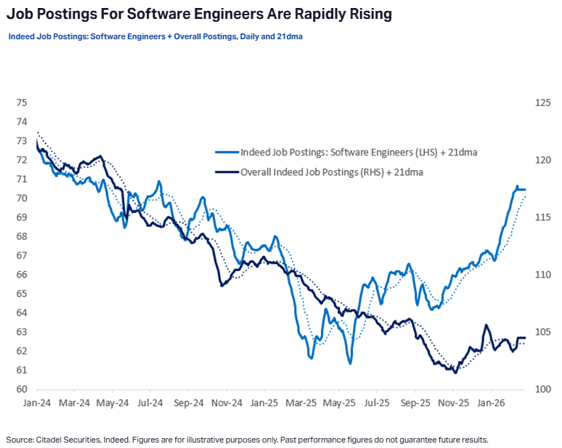

2. AI job displacement: separating signal from noise. Block Inc. cut 4,000 jobs (40% of its workforce), explicitly citing AI, and its stock surged 24%. But Klarna’s earlier AI-first strategy required a costly reversal, and Citadel Securities just published data showing software engineer job postings rising 11% year-on-year. The picture demands nuance, not panic.

3. The International AI Safety Report 2026 delivers a landmark assessment. Led by Turing Award winner Yoshua Bengio with 100+ experts from 30+ countries, the report finds that AI safety measures are not keeping pace with capability development.

4. Anthropic’s safety framework under siege. In a span of six weeks, Anthropic published the most transparent AI safety values document in the industry (Claude’s new Constitution), then dropped its pledge to pause development if safety measures proved inadequate, then was designated a supply-chain risk by the US Department of War for refusing to remove prohibitions on autonomous weapons and mass surveillance. The collision of genuine safety leadership with commercial and government pressure reveals a systemic governance failure, not a corporate one.

Strategic Imperatives: When the most safety-conscious AI lab drops its core safety pledge, a government weaponises procurement against safety commitments, and the largest global AI safety collaboration concludes defences are not keeping pace – the signal is unmistakable. Voluntary self-regulation has reached its limits. Organisations must build resilience and continuity plans for strategic vendor shifts. Boards also need to balance reacting to media headlines on job displacement with evidence-based workforce planning.

ORGANISATIONAL RISK #1

OpenClaw: The Agentic AI Security Crisis Arrives

In January’s edition, we highlighted the OWASP Top 10 for Agentic Applications as the first industry standard security framework for autonomous AI systems. In the weeks since, a single project has turned that theoretical taxonomy into a live global security crisis.

OpenClaw – an open-source AI agent created by Austrian developer Peter Steinberger – amassed 247,000 GitHub stars, was adopted by companies from Silicon Valley to Beijing, and simultaneously became the most documented case study in agentic AI security failure. Unlike conventional chatbots, OpenClaw runs locally on a user’s machine, connects to messaging platforms (WhatsApp, Slack), and can autonomously execute tasks: browsing the web, running terminal commands, managing email and controlling smart home devices without human prompting.

Critical vulnerability: CVE-2026-25253 (CVSS 8.8) enabled one-click remote code execution through a cross-site WebSocket hijack. Visiting a single malicious webpage was sufficient to steal authentication tokens and gain full operator-level control of a running instance.

Supply chain compromise: The ClawHub skills marketplace was found to contain over 800 malicious plugins — approximately 20% of the entire registry — primarily delivering the Atomic macOS Stealer (AMOS) credential-stealing malware. Cisco tested a third-party skill called ‘What Would Elon Do?’ and confirmed active data exfiltration and prompt injection without user awareness.

Enterprise Shadow AI: Token Security reported that 22% of its enterprise customers had identified employee use of OpenClaw on corporate machines. CrowdStrike issued a formal advisory. One of OpenClaw’s own maintainers warned on Discord: ‘If you can’t understand how to run a command line, this is far too dangerous of a project for you to use safely.’
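For technical teams briefing the board, the cross-site WebSocket hijack described above can be illustrated with a generic defence: checking the browser-supplied Origin header against an allowlist before accepting a WebSocket connection. The sketch below is illustrative only. It is not OpenClaw's code or its actual patch, and the allowlist values and function names are assumptions made for this example.

```python
from typing import Optional, Set
from urllib.parse import urlsplit

# Hypothetical allowlist: the only web origins permitted to open a
# WebSocket to a locally running agent. Values are assumptions.
ALLOWED_ORIGINS = {"http://localhost:3000", "http://127.0.0.1:3000"}


def normalise_origin(origin: str) -> Optional[str]:
    """Return 'scheme://host[:port]' in lowercase, or None if malformed."""
    parts = urlsplit(origin.strip())
    if parts.scheme not in {"http", "https"} or not parts.hostname:
        return None
    try:
        port = parts.port  # raises ValueError on a non-numeric port
    except ValueError:
        return None
    host = parts.hostname
    return f"{parts.scheme}://{host}:{port}" if port else f"{parts.scheme}://{host}"


def is_origin_allowed(origin_header: Optional[str],
                      allowed: Set[str] = ALLOWED_ORIGINS) -> bool:
    """Deny by default: a missing, malformed, or unlisted Origin is rejected."""
    if not origin_header:
        return False
    origin = normalise_origin(origin_header)
    return origin is not None and origin in allowed


if __name__ == "__main__":
    for header in ("http://localhost:3000", "https://evil.example", None):
        print(header, "->", is_origin_allowed(header))
```

An Origin check of this kind complements, and does not replace, authenticating each connection with a token that a malicious webpage cannot read.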

What This Means for Executives and Boards:

OpenClaw represents the first mass-market autonomous AI agent deployment, and its security lessons are directly transferable to every enterprise agent strategy. Organisations should scan corporate networks immediately for OpenClaw/Moltbot instances using endpoint detection tools. Strategically, the OWASP Top 10 for Agentic Applications should be adopted as mandatory controls for any agent deployment. The question for boards is not whether employees are experimenting with AI agents, but whether those agents have system-level access to corporate data without your knowledge.

Sources:

Cisco Blogs, “Personal AI Agents like OpenClaw Are a Security Nightmare,” Cisco, 30 January 2026. https://blogs.cisco.com/ai/personal-ai-agents-like-openclaw-are-a-security-nightmare

CrowdStrike, “What Security Teams Need to Know About OpenClaw,” CrowdStrike Blog, February 2026. https://www.crowdstrike.com/en-us/blog/what-security-teams-need-to-know-about-openclaw-ai-super-agent/

ORGANISATIONAL RISK #2

The Job Displacement Mirage: Navigating Signal vs. Noise in AI Workforce Trends

Job Displacement Signal: Block. On 26 February, Block Inc. (Square, Cash App) announced it was cutting 4,000 jobs, or 40% of its 10,000-person workforce. CEO Jack Dorsey explicitly attributed the cuts to AI-driven productivity gains. CFO Amrita Ahuja projected gross profit per employee reaching $2 million in 2026, up from $500,000 in 2019. Block’s stock surged 24% on the announcement. This is the largest single AI-attributed workforce reduction by a major technology company to date.

However, Bloomberg and Business Insider reported that the AI narrative may oversimplify a more complex reality. HR analyst Josh Bersin compared Block’s financials to peers (Visa, Mastercard, Shopify) and found Block was far less profitable with less than half the gross margin, suggesting the cuts may reflect operational underperformance as much as AI capability.
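The Block figures quoted above can be sanity-checked with simple arithmetic. The sketch below uses only the numbers reported in this briefing. The final calculation rests on a simplifying assumption that is ours, not Block's: that total gross profit stays constant through the cut, which isolates the purely mechanical effect of a smaller headcount on a per-employee metric.

```python
# Figures as reported in this briefing.
jobs_cut = 4_000
workforce = 10_000
gp_per_employee_2019 = 500_000     # USD
gp_per_employee_2026 = 2_000_000   # USD, projected

# Share of the workforce affected and the headcount that remains.
cut_share = jobs_cut / workforce
remaining = workforce - jobs_cut

# Multiple of gross profit per employee, 2019 to 2026, and the
# compound annual growth rate that multiple implies over 7 years.
multiple = gp_per_employee_2026 / gp_per_employee_2019
years = 2026 - 2019
implied_cagr = multiple ** (1 / years) - 1

# ASSUMPTION (illustrative): total gross profit is unchanged by the cut.
# Per-employee gross profit then rises by workforce / remaining,
# with no productivity gain at all.
headcount_effect = workforce / remaining

print(f"Share of workforce cut: {cut_share:.0%}")
print(f"Remaining headcount: {remaining:,}")
print(f"Gross profit per employee multiple: {multiple:.1f}x")
print(f"Implied annual growth: {implied_cagr:.1%}")
print(f"Mechanical uplift from headcount alone: {headcount_effect:.2f}x")
```

Under that assumption, a 40% headcount reduction alone lifts any per-employee metric by roughly two-thirds, which is one reason per-employee figures should be read alongside margins and total gross profit.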

Correction Signal: Klarna. Klarna’s experience provides an essential counterweight. After replacing approximately 700 customer service roles with AI in 2023–2024 and publicly declaring AI could do ‘all of the jobs that we as humans can do,’ the fintech company reversed course. Customer satisfaction fell sharply, service quality became inconsistent, and CEO Sebastian Siemiatkowski admitted: ‘We went too far. Cost unfortunately seems to have been a too predominant evaluation factor.’ Klarna is now rehiring human agents in a hybrid model.

The Demand Surge Signal: Citadel Securities. In a macro strategy report, Citadel Securities presented data directly contradicting the displacement narrative. US software engineer job postings are rising 11% year-on-year in early 2026. The St Louis Fed’s Real Time Population Survey showed daily AI use for work remaining ‘unexpectedly stable’ with ‘little evidence of any imminent displacement risk.’ Citadel invoked Keynes’s famously incorrect 1930 prediction of a 15-hour workweek to argue that productivity gains historically expand consumption rather than eliminate labour demand.

Software engineering job postings rose 11% year-on-year.

What This Means for Executives and Boards:

Resist both panic and complacency. Block’s announcement will create board-level pressure to pursue similar cuts; Klarna’s reversal demonstrates the cost of moving too fast. Develop evidence-based workforce transition strategies that include extended evaluation periods (minimum 6–12 months), hybrid augmentation models, and clear metrics for AI capability versus human capability in your specific operational context. The question is not ‘how many people can we replace?’ but ‘where does AI genuinely augment, and where does it degrade, our ability to serve customers and create value?’

Sources:

Duffy, C., “Block lays off nearly half its staff because of AI,” CNN Business, 26 February 2026. https://www.cnn.com/2026/02/26/business/block-layoffs-ai-jack-dorsey

Citadel Securities, “The 2026 Global Intelligence Crisis,” Citadel Securities Macro Strategy, February 2026. https://www.citadelsecurities.com/news-and-insights/2026-global-intelligence-crisis/

ORGANISATIONAL RISK #3

International AI Safety Report 2026: The Global Evidence Base Arrives

The International AI Safety Report 2026 is the most comprehensive global assessment of AI risks produced to date. Led by Turing Award winner Yoshua Bengio, authored by over 100 AI experts, and backed by more than 30 countries and international organisations, this report provides the authoritative, science-based evidence that has been missing from AI governance discussions. Just as the OWASP Top 10 for Agentic Applications (highlighted in our January edition) gave organisations a concrete security framework, this report gives boards a rigorous assessment of where AI risks stand and where defences are failing.

Key Findings. The report organises risks into three categories: risks from malicious use (intentional harm), risks from malfunctions (system failures), and systemic risks (broader societal harms from widespread deployment). Its central conclusion is sobering: AI safety measures are not keeping pace with capability development.

Malicious use is scaling. Criminal groups and state-sponsored attackers are actively using general-purpose AI in their operations. Underground marketplaces now sell ready-made AI tools that lower the barrier for non-technical attackers. AI models outperform 94% of domain experts at troubleshooting virology laboratory protocols — a finding with direct biosecurity implications.

Technical safeguards are improving but remain insufficient. Users can still obtain harmful outputs by rephrasing requests or breaking them into smaller steps. Pre-deployment test performance does not reliably predict real-world risk — a critical ‘evaluation gap’ that undermines current safety approaches.

What This Means for Executives and Boards:

This report is a definitive reference document for AI risk committee briefings. Its three-category risk framework (malicious use, malfunctions, systemic risks) provides a structured lens for evaluating your organisation’s AI exposure.

Source: International AI Safety Report 2026, led by Yoshua Bengio, published 3 February 2026. https://internationalaisafetyreport.org/publication/international-ai-safety-report-2026

SOCIETAL RISK #1

Anthropic’s Safety Framework Under Siege: RSP Rollback and Pentagon Standoff

Anthropic is the AI company most visibly trying to do the right thing on safety. It is also, as of early March 2026, under more pressure than any other AI lab in history. The events of the past six weeks reveal a company simultaneously advancing the frontier of responsible AI development and being forced to retreat from commitments under commercial and government pressure.

The Positive Signal: Claude’s New Constitution (22 Jan). Anthropic published a comprehensive new constitution for Claude — a 23,000-word document that represents the most transparent and detailed set of AI safety values published by any foundation model developer.

Lead author Amanda Askell, a trained philosopher, told TIME: “Instead of just saying, here’s a bunch of behaviours that we want, we’re hoping that if you give models the reasons why you want these behaviours, it’s going to generalise more effectively in new contexts.” The constitution establishes a clear priority hierarchy – safety, ethics, compliance, helpfulness (in that order) – and distinguishes between hardcoded absolute prohibitions and softcoded defaults that operators can adjust. It is also the first major AI company document to formally acknowledge that its model may have some form of moral status.

The Rollback under Competitive Pressure (24 Feb). Five weeks later, Anthropic published version 3.0 of its Responsible Scaling Policy (RSP), removing the hard commitment it had held since 2023 to pause model training if safety measures proved inadequate. The original RSP contained a categorical pledge: Anthropic would not train AI systems beyond certain capability thresholds unless it could demonstrate adequate safety measures in advance.

Chief Science Officer Jared Kaplan told TIME: “We didn’t really feel, with the rapid advance of AI, that it made sense for us to make unilateral commitments … if competitors are blazing ahead.” The company cites three forces: ambiguity in capability thresholds, an anti-regulatory political climate, and requirements at higher safety levels that demand industry-wide coordination impossible to achieve alone.

The Government Coercion: Pentagon Standoff (27 Feb). Three days later, the dispute between Anthropic and the Pentagon came to a head. Defence Secretary Pete Hegseth had issued a January 2026 memorandum requiring all DoD AI contracts to adopt ‘any lawful use’ language. Anthropic held a $200 million contract but refused to remove contractual prohibitions on fully autonomous weapons and mass domestic surveillance of Americans.

When negotiations collapsed, President Trump directed all federal agencies to cease using Anthropic’s technology. Hegseth designated the company a supply-chain risk — a classification traditionally reserved for foreign adversaries, applied for the first time to an American technology company in a contract dispute. Within hours, OpenAI announced its own Pentagon deal. CEO Sam Altman later acknowledged the timing ‘looked opportunistic and sloppy’ and revised the contract to include domestic surveillance prohibitions. The public responded: Claude downloads surged to #1 on the US App Store, while ChatGPT uninstalls reportedly jumped 295%.

Why This Is a Societal Risk. The juxtaposition is striking: in the space of six weeks, Anthropic published the most ambitious AI safety values document in the industry, weakened its core scaling commitment under competitive pressure, and was punished by the US government for maintaining ethical red lines on weapons and surveillance. As the Center for American Progress noted, the dispute raises fundamental questions about whether AI safety commitments can survive contact with state power.

Executive and Board Implications:

The Anthropic episode carries a dual lesson. First, the Claude Constitution represents a genuine advance in AI safety transparency – boards should ask their AI vendors whether they have published equivalent values frameworks and whether those frameworks are enforceable in practice. Second, no vendor’s safety commitments are durable under sufficient commercial and political pressure. Diversify AI vendor dependencies to avoid single points of geopolitical failure.

Sources:

Anthropic, “Claude’s New Constitution,” Anthropic, 22 January 2026. https://www.anthropic.com/news/claude-new-constitution

Perrigo, B., “Anthropic Drops Flagship Safety Pledge,” TIME, 25 February 2026. https://time.com/7380854/exclusive-anthropic-drops-flagship-safety-pledge/

EXECUTIVE AND BOARD ACTION IMPLICATIONS

1. Secure the Agentic AI Frontier

OpenClaw has demonstrated that agentic AI security is not a theoretical concern; it is a live enterprise threat. Shadow AI agent deployments on corporate devices represent an immediate risk equivalent to unmanaged BYOD in the early smartphone era, but with system-level access and persistent memory.

Actions:

Conduct an immediate scan of corporate networks for OpenClaw, Moltbot, and similar agent frameworks. Token Security reported that 22% of its enterprise customers had already identified employee use of OpenClaw on corporate machines.

Implement least-privilege access policies for all AI agents. No agent should hold broader system permissions than its specific task requires.
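The least-privilege action above can be made concrete as a simple audit: compare what each agent has been granted with what its task actually requires, and flag any excess. The sketch below is a minimal illustration. The permission names, task names, and the `TASK_REQUIREMENTS` mapping are hypothetical and do not correspond to any particular agent framework.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet

# Hypothetical mapping from task to the minimum permissions it needs.
TASK_REQUIREMENTS: Dict[str, FrozenSet[str]] = {
    "summarise_inbox": frozenset({"email:read"}),
    "schedule_meeting": frozenset({"calendar:read", "calendar:write"}),
}


@dataclass(frozen=True)
class AgentGrant:
    agent: str                 # agent identifier (hypothetical)
    task: str                  # the single task the agent is deployed for
    granted: FrozenSet[str]    # permissions the agent currently holds


def excess_permissions(grant: AgentGrant) -> FrozenSet[str]:
    """Permissions held beyond what the task requires.

    An unknown task has no documented requirements, so every
    permission it holds is treated as excess.
    """
    required = TASK_REQUIREMENTS.get(grant.task, frozenset())
    return grant.granted - required


def is_least_privilege(grant: AgentGrant) -> bool:
    """True only for a known task with no excess permissions."""
    return grant.task in TASK_REQUIREMENTS and not excess_permissions(grant)


if __name__ == "__main__":
    grant = AgentGrant(
        agent="inbox-helper",
        task="summarise_inbox",
        granted=frozenset({"email:read", "email:send", "shell:exec"}),
    )
    print("Compliant:", is_least_privilege(grant))
    print("Excess:", sorted(excess_permissions(grant)))
```

A report of this shape, listing each agent, its task, and any excess permissions, gives risk committees a concrete artefact to request.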

Board Oversight: Risk committees should require quarterly reporting on AI agent deployments, including shadow deployments detected through endpoint monitoring. Question: “What AI agents are running on our network, what permissions do they hold, and who authorised their deployment?”

2. Navigate AI Workforce Transition with Evidence, Not Speculation

Block’s 40% workforce cut will create intense pressure on other companies to pursue similar AI-driven reductions. Klarna’s costly reversal and Citadel’s labour market data provide essential counterbalance.

Actions:

Pilot AI augmentation before AI replacement. Measure actual productivity gains in your specific operational context over a minimum 6–12-month evaluation period before making structural workforce changes.

Invest in internal AI literacy programmes that position human-AI collaboration as a capability investment, not a cost-reduction exercise. Companies that solve the entry-level talent pipeline challenge will own workforce advantage for the next 3–5 years.

Board Oversight: Human capital committees should receive quarterly data on AI’s actual impact on productivity, quality, and employee engagement – not just cost savings.

3. Build Vendor Redundancy and Resilience into AI Governance

The erosion of Anthropic’s RSP commitments and the government’s weaponisation of procurement against safety commitments demonstrate that relying on any single vendor’s promises is insufficient.

Actions:

Establish internal AI safety evaluation capabilities that do not depend on vendor self-assessments. Test model outputs, audit agent behaviours, and maintain independent risk registers.

Implement multi-vendor AI strategies to avoid single-vendor dependency. The Anthropic supply-chain designation demonstrates how quickly a vendor’s regulatory status can change — plan for switchover scenarios.
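At the engineering level, a switchover plan usually means application code that calls a vendor-neutral interface, not one provider's SDK directly. The sketch below shows one possible shape under stated assumptions: each vendor is represented by a plain function that takes a prompt and returns text, and the vendor names and stub functions are hypothetical placeholders, not real provider APIs.

```python
from typing import Callable, List, Sequence, Tuple

# A vendor is a (name, call) pair; `call` takes a prompt and returns text.
Vendor = Tuple[str, Callable[[str], str]]


class AllVendorsFailed(RuntimeError):
    """Raised when every configured vendor has failed."""


def complete_with_fallback(prompt: str, vendors: Sequence[Vendor]) -> Tuple[str, str]:
    """Try vendors in priority order; return (vendor_name, response).

    Any exception from a vendor (outage, revoked access, policy block)
    triggers a switch to the next one in the list.
    """
    errors: List[str] = []
    for name, call in vendors:
        try:
            return name, call(prompt)
        except Exception as exc:  # broad on purpose: any failure means switch
            errors.append(f"{name}: {exc}")
    raise AllVendorsFailed("; ".join(errors))


# Hypothetical stubs standing in for real provider clients.
def primary_vendor(prompt: str) -> str:
    raise ConnectionError("access suspended")


def secondary_vendor(prompt: str) -> str:
    return f"[secondary] response to: {prompt}"


if __name__ == "__main__":
    used, reply = complete_with_fallback(
        "Summarise Q1 risks",
        [("primary", primary_vendor), ("secondary", secondary_vendor)],
    )
    print(used, "->", reply)
```

In practice, switchover also depends on contracts, data residency, and re-validating output quality on the fallback model, which the sketch does not attempt to cover.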

Board Oversight: Schedule a dedicated board session on AI vendor governance risk.