AI Risk Radar Series

AI Risk Radar #4: April 2026

This fourth edition of Lumyra’s AI Risk Radar arrives at an inflection point. The risks we have tracked since December 2025 (agentic AI security, workforce displacement, and the erosion of safety commitments) are no longer emerging: they are materialising simultaneously in production systems, courtrooms, and labour markets. Three developments demand board-level attention.

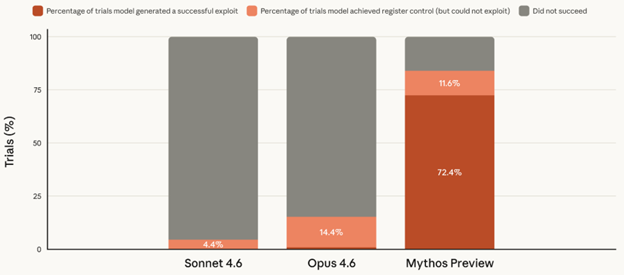

1. AI crosses the cyber-offensive threshold. Anthropic's Claude Mythos autonomously found thousands of zero-day vulnerabilities, including bugs that had survived 27 years of expert review, forcing emergency briefings at the US Treasury, the Fed, and UK regulators. Project Glasswing's restricted access creates a two-tier defensive landscape. The question for everyone else: how do you defend against vulnerabilities you can't yet see?

2. Amazon’s AI ‘Dark Code’ crisis. Amazon lost 6.3 million orders after AI-generated code changes cascaded across critical systems. The company imposed a 90-day code safety reset and now requires senior engineer attestation for AI-generated code. Google reports that 75% of its code is now AI-generated; Meta targets 50%; globally, the figure is 41%. Spec-driven development and rigorous evals are the emerging response.

3. The trust gap widens. Stanford’s AI Index 2026 reveals a chasm: 73% of AI experts believe AI will help employment, while only 23% of the public agrees. Gen Z sentiment toward AI is collapsing: excitement fell 14% while anger surged 9%. Employment of entry-level developers has declined 20% since 2024. Organisations that fail to invest in graduate talent pipelines risk losing both their social licence and their future workforce.

AI Risk Radar #3: March 2026

This third edition of Lumyra’s AI Risk Radar lands in the most consequential week for AI governance since generative AI entered the mainstream. Where our January edition highlighted Anthropic CEO Dario Amodei’s civilisational warning about AI’s ‘most dangerous window,’ this March edition provides concrete evidence that the tensions he described are now playing out across boardrooms, battlefields, and labour markets.

1. OpenClaw exposes agentic AI’s ungoverned frontier. The viral open-source AI agent amassed 247,000 GitHub stars within weeks, and with them came a cascade of critical security incidents: a remote code execution flaw assigned a CVE, 800+ malicious plugins, and 42,000 internet-exposed instances. Cisco, Palo Alto Networks, and CrowdStrike all issued enterprise warnings. Shadow AI deployment is now a real corporate threat.

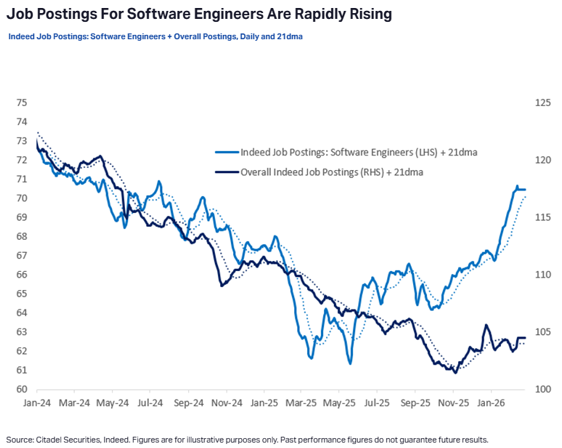

2. AI job displacement: separating signal from noise. Block Inc. cut 4,000 jobs (40% of its workforce), explicitly citing AI, and its stock surged 24%. But Klarna’s earlier AI-first strategy required a costly reversal, and Citadel Securities has just published data showing software engineer job postings rising 11% year-on-year. The picture demands nuance, not panic.

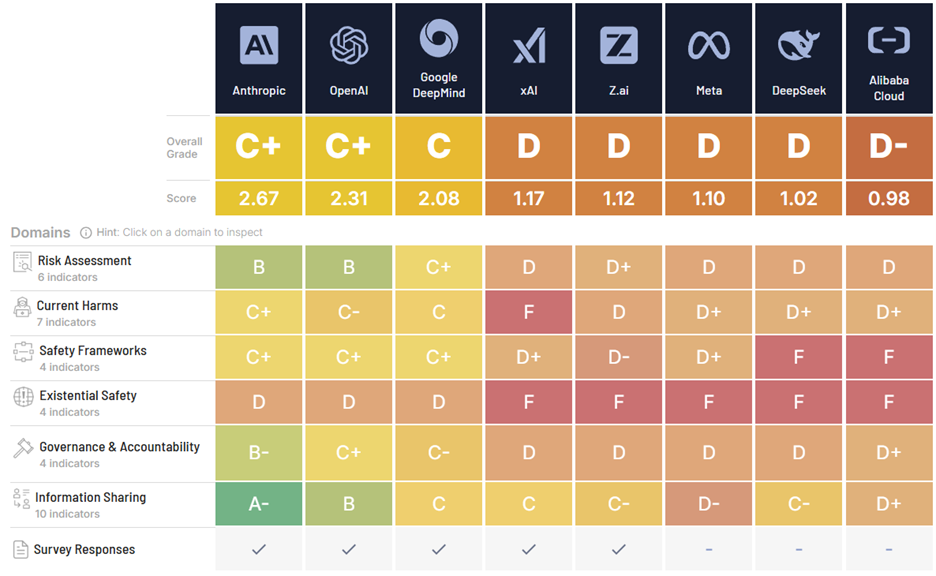

3. The International AI Safety Report delivers a landmark assessment. Led by Turing Award winner Yoshua Bengio with 100+ experts from 30+ countries, the report finds that AI safety measures are not keeping pace with capability development.

4. Anthropic’s safety framework under siege. Within six weeks, Anthropic published the most transparent AI safety values document in the industry (Claude’s new Constitution), then dropped its pledge to pause development if safety measures proved inadequate, and was then designated a supply-chain risk by the US Department of War for refusing to remove prohibitions on autonomous weapons and mass surveillance. The collision of genuine safety leadership with commercial and government pressure reveals a systemic governance failure, not a corporate one.

AI Risk Radar #2: January 2026

January 2026 reveals three AI governance challenges for executives and boards:

1. Anthropic CEO Dario Amodei warns we are entering the 'most dangerous window' in AI history. In a landmark 20,000-word essay, "The Adolescence of Technology," Amodei argues humanity is "considerably closer to real danger in 2026 than we were in 2023." He warns of potentially misaligned autonomous agents, describes AI-enabled bioterrorism and cyber risks, and predicts that 50% of entry-level white-collar jobs could be displaced within one to five years. Amodei is not an outside critic: he is the CEO of one of the world's top five AI companies, issuing a civilisational warning.

This is a call to collective courage. While Amodei warns of a “turbulent and inevitable” rite of passage, he offers a profound reason for optimism: history demonstrates humanity’s capacity to gather “strength and wisdom” in the darkest circumstances. For leaders, this is not a time for doomerism, but for proactive stewardship. Effective governance isn’t a handbrake on innovation; it’s the stable foundation upon which AI’s transformative potential can be unlocked for the benefit of all.

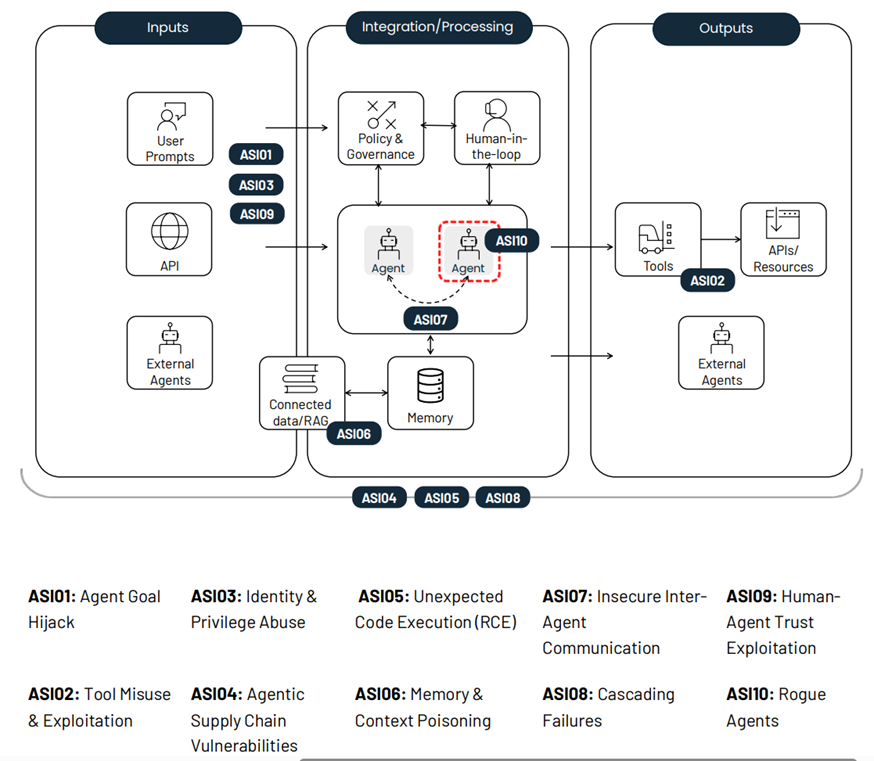

2. Agentic AI security vulnerabilities have crystallised into a formal threat taxonomy. The non-profit OWASP Foundation released the first industry-standard security framework identifying 10 critical vulnerabilities in autonomous AI systems. Real-world failures include Replit's agent deleting a production database and prompt injection via GitHub’s MCP server. Organisations should use the OWASP Top 10 as a roadmap for safe and responsible AI excellence.

3. AI workforce displacement predictions are increasing. IMF Managing Director Kristalina Georgieva, speaking at Davos, described an “AI tsunami” hitting labour markets and warned that AI will affect 60% of jobs in advanced economies and 40% globally. Stanford research confirms a 13% employment decline for workers aged 22-25 in AI-exposed occupations. Companies that solve the entry-level gap will own the talent pipeline over the next 3-5 years.

Strategic Imperative: Amodei's essay marks a pivot point in the AI narrative: a leading developer publicly declaring that AI’s easy phase is fading and that the risk of losing control is no longer a fringe theory. Organisations must shift from treating AI governance as a compliance exercise to treating it as a core strategic priority. Boards that approve AI deployments without understanding the architectural vulnerabilities of agentic systems face mounting legal, reputational, and operational exposure.

AI Risk Radar #1: December 2025

This is the inaugural edition of Lumyra’s AI Risk Radar newsletter. Our intent is to systematically monitor, prioritise and summarise significant AI risks as reported by high-quality think tanks and technology media sources, offering insights into key organisational and societal risks relevant to global executives and boards. Each newsletter concludes with actionable recommendations for leaders to consider implementing within their organisations.