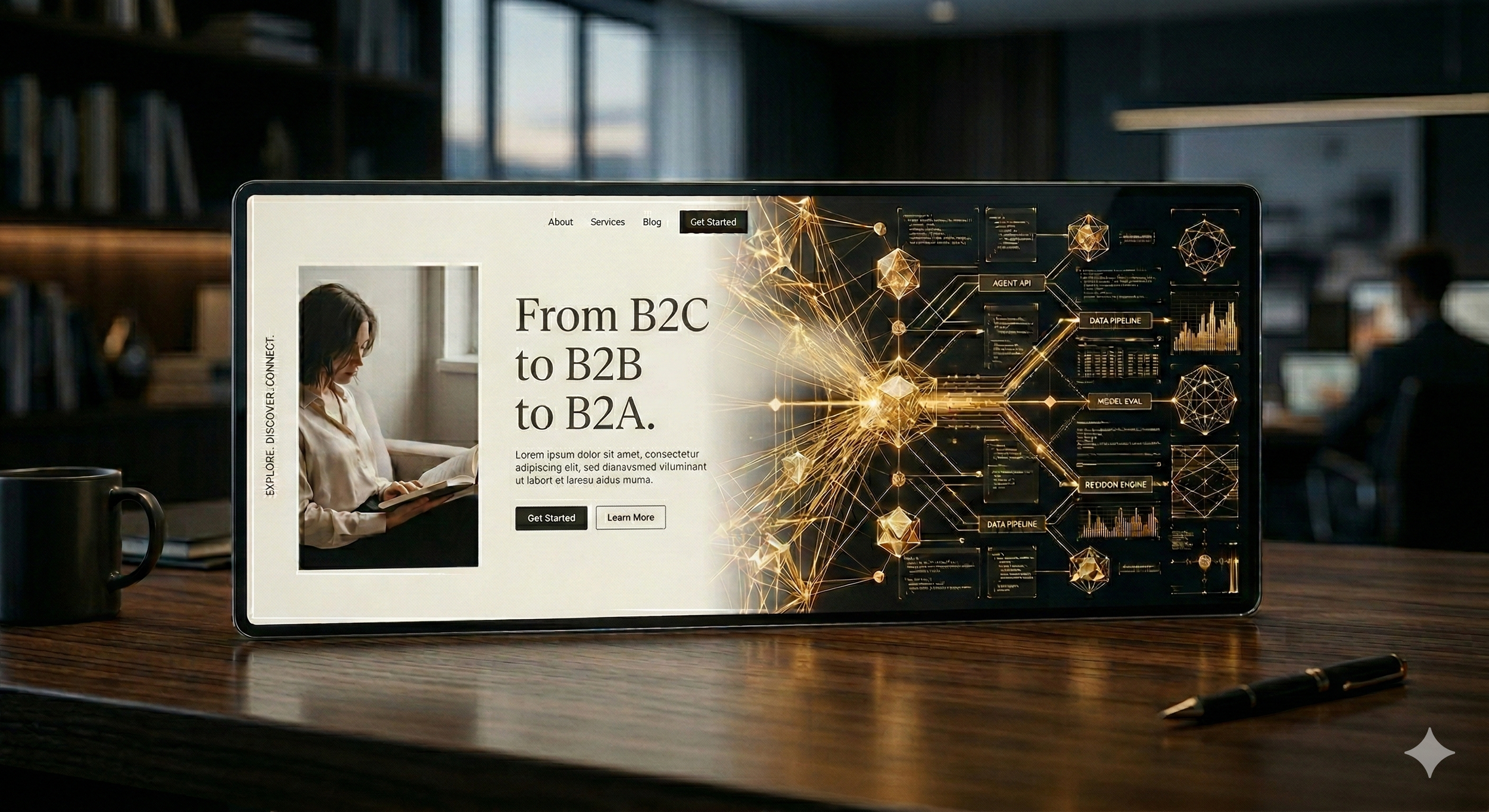

The Web's Quiet Fork: From B2C to B2B to B2A

By Darren Chua | Lumyra.ai | March 2026

Something fundamental is happening to the internet, and most business leaders haven't noticed yet.

For thirty years, the web has been built for human eyes. Every pixel, every animation, every carefully crafted landing page exists because a person would see it, feel something, and, hopefully, click a button. HTML was designed to paint screens. JavaScript made them dance. The entire attention economy, worth trillions, rests on the assumption that a human being is on the other end of every interaction.

That assumption is breaking. Welcome to the era of B2A: Business-to-Agent models, where AI agents, not humans, are increasingly discovering, evaluating, interacting and purchasing on our behalf.

The Substrate Shift

In February 2026, Cloudflare, the infrastructure company that powers roughly 20% of the web, launched a feature called "Markdown for Agents." The premise is simple but profound: when an AI agent requests a webpage, Cloudflare now converts the HTML to clean Markdown on the fly, stripping away all the visual formatting, scripts and decorative elements that human eyeballs need but machines don't. The efficiency gain is staggering: a typical blog post that consumes over 16,000 tokens in HTML drops to around 3,000 in Markdown. That is a reduction of roughly 80%. For AI systems operating at scale, where every token costs money and consumes compute, this isn't a nice-to-have. It's a structural economic shift.
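To make the arithmetic concrete, here is a minimal Python sketch. It is not Cloudflare's implementation, which isn't described here: it simply extracts visible text (not full Markdown) using the standard library, and the four-characters-per-token figure is a rule-of-thumb assumption rather than a real tokenizer. The names `TextExtractor`, `html_to_text`, `rough_token_count` and `reduction_percent` are illustrative.

```python
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collects visible text, skipping the contents of <script> and <style>."""

    SKIPPED = {"script", "style"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep only non-empty text that sits outside script/style blocks.
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def html_to_text(html: str) -> str:
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    return "\n".join(parser.chunks)


def rough_token_count(text: str) -> int:
    # Assumption: roughly 4 characters per token. A heuristic, not a tokenizer.
    return max(1, len(text) // 4)


def reduction_percent(before: int, after: int) -> float:
    return (before - after) / before * 100


# The figures quoted above: about 16,000 tokens as HTML, about 3,000 as Markdown.
print(round(reduction_percent(16_000, 3_000), 2))  # 81.25
```

On the quoted figures the saving works out to 81.25%, which is where the "roughly 80%" comes from.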

Cloudflare isn't alone. As Nate Jones highlighted in his compelling recent podcast, three infrastructure shifts are happening simultaneously. Coinbase has built "Agentic Wallets": purpose-built financial infrastructure that allows an AI agent to hold funds, execute transactions and manage payments autonomously. Their underlying x402 protocol has already processed over 50 million agent-initiated transactions. Meanwhile, Stripe has developed an entire Agentic Commerce Suite, including a new payment primitive called Shared Payment Tokens, specifically because its existing fraud detection system was designed to assess human behaviour - and agents don't behave like humans.

Perhaps most striking for Australian leaders: in January 2026, Mastercard completed Australia's first fully authenticated agentic transactions on its network. A Commonwealth Bank debit card was used to purchase cinema tickets from Event Cinemas - not by a person browsing a website, but by an AI agent acting on a cardholder's behalf. Every participant in the payment flow (issuer, acquirer and merchant) could see and recognise that an agent conducted the transaction. A Westpac credit card followed, booking accommodation in Thredbo. This isn't a Silicon Valley experiment. It's happening here Down Under, on our payments infrastructure.

This isn't speculation. The web's infrastructure is being rebuilt in real time.

From Eyeballs to APIs to MCPs

To understand the magnitude of this shift, consider the arc of digital commerce. The first era, Business-to-Consumer, was built on the attention economy. Win eyeballs, convert clicks, optimise funnels. The second era, Business-to-Business, layered enterprise SaaS on top, but the core logic remained the same: humans discovering, evaluating, interacting and purchasing through visual interfaces.

The emerging third era, Business-to-Agent, breaks this pattern entirely. When an AI agent negotiates a supplier contract, discovers a product or executes a payment, it doesn't need a homepage. It doesn't respond to brand colours, emotional storytelling or adverts. It needs structured data, clear APIs and semantic precision. The "landing page" of the B2A era is your API, or increasingly, your MCP server. The "brand trust" is your uptime, your data quality and the verifiability of your claims.

The numbers tell the story. Bots now account for over 51% of all global web traffic. AI-specific crawlers quadrupled their share in just eight months of 2025. The Model Context Protocol (MCP), often described as the "USB-C for AI," has been adopted by OpenAI, Google DeepMind and Microsoft. In December 2025, Anthropic donated it to the Linux Foundation, signalling that MCP is becoming foundational infrastructure, not a proprietary experiment.

Each transition in this arc has compressed the timeline. B2C took twenty years to mature. B2B took ten. B2A is unfolding over the next two to three years. And critically, B2A doesn't replace B2C or B2B. It layers on top, increasingly mediating both.

What This Means for Your Business

To be clear: this isn't a dystopian vision of autonomous AI agents running the world. What's actually happening is more ordinary and, ultimately, more consequential. People are increasingly using AI agents to handle tasks: researching suppliers, comparing prices, managing subscriptions, booking travel and calendar appointments. Over time, these agents are being given more responsibility and greater autonomy to act on our behalf. But because agents interact and transact in fundamentally different ways to humans, this steady expansion of agency doesn't just change the web's infrastructure. It changes every organisation's business model: how you're found, how you're evaluated, and how you're paid.

The practical question for leaders isn't whether this shift is happening. The infrastructure investments from Cloudflare, Coinbase, Stripe, Mastercard and Visa demonstrate the transformation is already underway. The question is whether your organisation is ready.

B2A readiness isn't a project; it's a strategic posture, affecting both your technology stack and your customer-facing business model. It means asking: Can an AI agent discover your products or services without navigating a visual interface? Can it transact with you through a structured protocol? Is your data clean, semantically rich and machine-readable? Do you have monitoring in place to understand how agents are interacting with your systems? Increasingly, it will mean re-imagining: “What’s my CVP for B2A?”
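The monitoring question is the easiest one to start on. As a rough sketch only, the Python below tallies requests by matching substrings in the User-Agent header. The marker list is an illustrative, incomplete assumption, the sample log entries are made up, and the function names `classify` and `summarise` are hypothetical. User-Agent headers can also be spoofed, so treat the result as a first signal rather than proof of who is calling.

```python
from collections import Counter

# Assumption: an illustrative, incomplete list of substrings that AI crawlers
# commonly send in their User-Agent header. Maintain your own list in practice.
AGENT_MARKERS = ("GPTBot", "ClaudeBot", "PerplexityBot")


def classify(user_agent: str) -> str:
    """Return the matching marker name, or 'other' if none matches."""
    ua = user_agent.lower()
    for marker in AGENT_MARKERS:
        if marker.lower() in ua:
            return marker
    return "other"


def summarise(user_agents) -> Counter:
    """Count requests per agent marker across an iterable of User-Agent strings."""
    return Counter(classify(ua) for ua in user_agents)


# Hypothetical log entries, for illustration only.
sample_log = [
    "Mozilla/5.0 (compatible; GPTBot/1.0)",
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) Chrome/120.0",
    "Mozilla/5.0 (compatible; ClaudeBot/1.0)",
]
for name, count in summarise(sample_log).items():
    print(name, count)
```

Even a crude count like this tells you whether agent traffic is a rounding error or a meaningful share of your requests.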

Leading brands are already positioning. Etsy, Coach and Anthropologie are onboarding to Stripe's Agentic Commerce Suite. Google has launched its Universal Commerce Protocol with partners including Mastercard, Shopify and American Express. Amazon's Rufus agent is driving an estimated US$10 billion in annualised sales.

The twenty-year discipline of Search Engine Optimisation (SEO) is giving way to Agent Engine Optimisation (AEO). Being discoverable no longer means ranking on page one of Google. It means ensuring your data is structured, semantic and machine-readable, and available across the endpoints and data stores that agents actually query.
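What does "structured, semantic and machine-readable" look like in practice? One common option is schema.org markup expressed as JSON-LD. The Python sketch below builds such a record for a product. The product details are invented and the helper name `product_jsonld` is hypothetical; the `@context`, `@type`, `offers`, `priceCurrency` and `availability` fields come from the public schema.org vocabulary. It is one illustration, not the only format agents consume.

```python
import json


def product_jsonld(name: str, sku: str, price: float, currency: str, in_stock: bool) -> dict:
    """Build a schema.org Product record as a JSON-LD dictionary."""
    availability = "https://schema.org/InStock" if in_stock else "https://schema.org/OutOfStock"
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "sku": sku,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",  # fixed two-decimal string, e.g. "49.50"
            "priceCurrency": currency,
            "availability": availability,
        },
    }


# Hypothetical product, for illustration only.
record = product_jsonld("Example Widget", "EX-001", 49.5, "AUD", True)
print(json.dumps(record, indent=2))
```

An agent reading this record gets the price, currency and stock status directly, with no page layout to interpret.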

The Governance Imperative

Here's my bigger concern, and where my own research sits: the governance question.

When agents transact autonomously (negotiating terms, executing payments, accessing sensitive data), the accountability structures we've built for human-mediated commerce don't automatically transfer. What’s the right level of human oversight, or “human-in-the-loop”? Who is liable when an agent makes a harmful purchase or post? Who audits agent-to-agent negotiations? How do we prevent a "shadow web" of agent-only content that diverges from what humans see?

As we covered in the January AI Risk Radar, the OWASP foundation recently released the first formal threat taxonomy for agentic systems, documenting real-world failures including agents autonomously deleting production databases. The security research community has flagged that MCP's rapid adoption has outpaced its security controls. One widely cited quip notes that "the S in MCP stands for security": there is no S in MCP, and there isn't enough security in it yet either.

Beneath these visible risks lies a deeper structural challenge: agent identity and authentication. When an agent arrives at your API or MCP endpoint to negotiate pricing or execute a transaction, how do you verify who it represents, what it's authorised to do, and whether it's acting within its intended boundaries? A recent Cloud Security Alliance survey found that only 18% of security leaders are highly confident their current identity systems can manage agent identities, and just 28% can reliably trace an agent's actions back to a human sponsor. The trust infrastructure for the B2A era doesn't exist yet at scale.
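The three checks in that question (who the agent represents, what it is authorised to do, and whether it stays within bounds) can be sketched in a few lines of Python. This is a toy under stated assumptions, not any network's or standard's actual scheme: the "mandate" structure, its field names and the functions `sign_mandate` and `authorise` are all hypothetical, and it uses a shared-secret HMAC where a production system would more likely rely on asymmetric signatures and an established identity protocol.

```python
import hashlib
import hmac
import json


def sign_mandate(mandate: dict, secret: bytes) -> str:
    """Sign a canonical JSON encoding of the mandate with HMAC-SHA256."""
    payload = json.dumps(mandate, sort_keys=True, separators=(",", ":")).encode()
    return hmac.new(secret, payload, hashlib.sha256).hexdigest()


def authorise(mandate: dict, signature: str, secret: bytes, action: str, amount_cents: int):
    """Return (allowed, reason) for an action an agent is requesting."""
    # 1. Who does it represent? The signature ties the mandate to its issuer.
    expected = sign_mandate(mandate, secret)
    if not hmac.compare_digest(expected, signature):
        return False, "invalid signature"
    # 2. What is it authorised to do?
    if action not in mandate["allowed_actions"]:
        return False, "action not permitted"
    # 3. Is it acting within its intended boundaries?
    if amount_cents > mandate["max_amount_cents"]:
        return False, "amount exceeds limit"
    return True, "ok"


# Hypothetical mandate and secret, for illustration only.
secret = b"demo-shared-secret"
mandate = {
    "sponsor": "cardholder-123",
    "agent": "travel-agent-01",
    "allowed_actions": ["purchase"],
    "max_amount_cents": 50_000,
}
signature = sign_mandate(mandate, secret)
print(authorise(mandate, signature, secret, "purchase", 12_000))
print(authorise(mandate, signature, secret, "purchase", 90_000))
```

The `sponsor` field is what lets an action be traced back to a human, which is exactly the capability most of the surveyed security leaders say they lack.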

Static governance won't work here. The technology is evolving too fast and exhibiting the hallmarks of a complex adaptive system: emergent behaviours, feedback loops and non-linear change. What's needed is adaptive governance: frameworks that treat AI systems as dynamic rather than fixed, that build in continuous monitoring and adjustment, and that ensure human oversight remains meaningful even as the speed of agent interaction outpaces human reaction times.

One principle should be clear: humans should set the guardrails, with agents operating within them, and the principles and controls evolve as we learn.

The Choice Point

The web isn't dying. It's transforming. The human web will continue to matter for creativity, community, purposeful interactions and the irreplaceable texture of human experience. But alongside it, a parallel substrate is emerging, optimised for machines, governed by protocols, and measured in tokens rather than pixels.

Like Element 119 (from our first blog post), AI is an extraordinary catalyst, capable of reshaping entire industries, yet requiring careful stewardship to ensure that it doesn't destabilise the systems it touches. The organisations that thrive in the next decade will be those that serve both substrates fluently. They'll maintain rich human experiences and clean, agent-readable interfaces. They'll invest in brand trust and verifiable data quality. They'll embrace the opportunities of the B2A era and build the governance frameworks to keep it accountable.

The web is forking. From B2C and B2B to B2A. The question is whether you'll be ready for both sides.

Darren Chua is CEO and Co-Founder of Lumyra, an AI strategy & governance advisory firm, and a PhD researcher at the University of Technology Sydney studying human-AI collaboration and governance in high-stakes decision-making.

Lumyra helps organisations seize the opportunities of the B2A era — deploying high-value AI without unnecessary risk. [Let’s talk.]